Webcam for Emotion Prediction using Machine Learning in Python

Hello Learners, in this tutorial we will build an emotion predictor that uses the webcam on your system and machine learning in Python. For this, we need a trained model (built from a dataset of labeled faces) and a camera accessible by the system. We will focus on four common emotions: Angry, Happy, Neutral, and Sad.

Emotion Prediction using ML in Python

This model tries to predict a person's emotion from their facial expression.

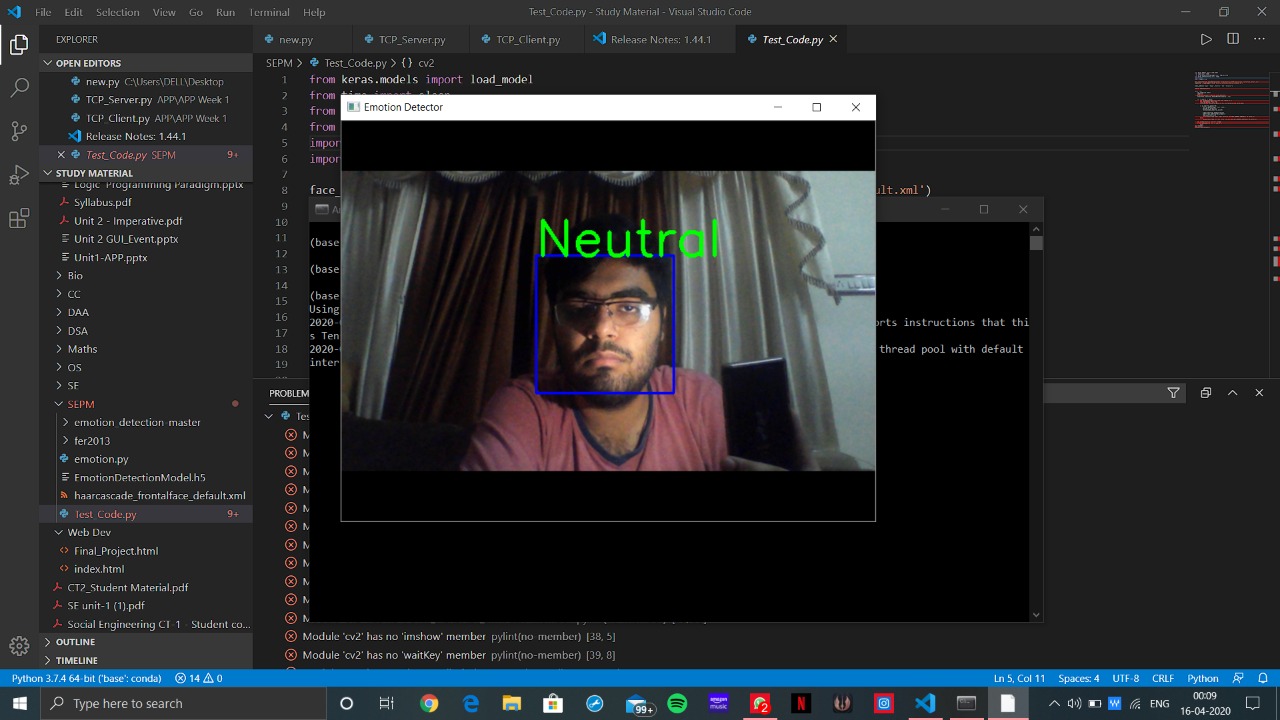

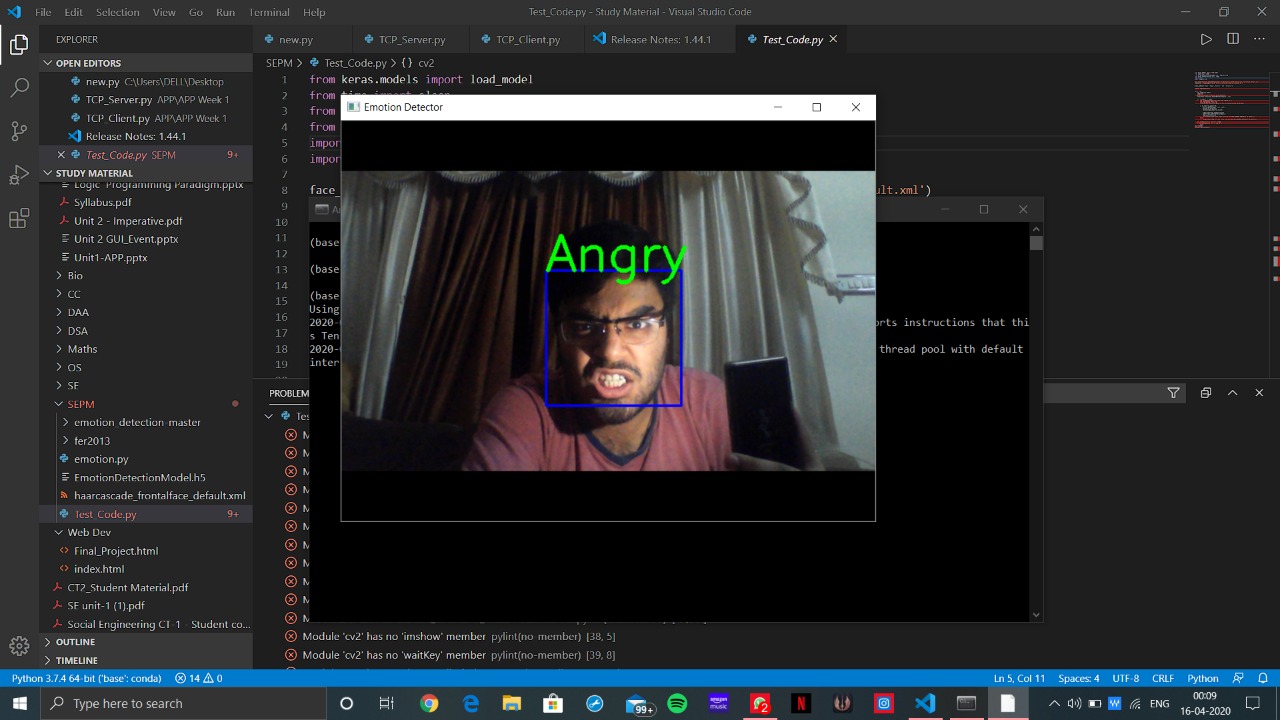

A sample to grab your attention:

This is how our result will look at the end of this tutorial.

Let’s get started…

First of all, make sure that you have the following Python libraries installed on your system, and try updating them to their latest versions if possible.

from keras.models import load_model
from time import sleep
from keras.preprocessing.image import img_to_array
from keras.preprocessing import image
import cv2
import numpy as np

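Training a model like this requires a large dataset with a lot of examples, so here we load an already trained Keras model, EmotionDetectionModel.h5, to classify the emotions. To find faces in each frame I will use haarcascade_frontalface_default.xml. Note that this file is not a dataset: it is a pre-trained Haar cascade face detector that ships with OpenCV and is easily available on the internet, so download it if you do not already have it. Adjust the paths below to wherever the two files are saved on your system.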

face_classifier=cv2.CascadeClassifier('/home/sumit/Downloads/haarcascade_frontalface_default.xml')

classifier = load_model('/home/sumit/Downloads/EmotionDetectionModel.h5')

Now define the classes of emotions (Angry, Happy, Neutral, Sad) and set the video source to the default webcam (device index 0), which OpenCV can capture from directly.

class_labels=['Angry','Happy','Neutral','Sad']
cap=cv2.VideoCapture(0)
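The order of class_labels matters: later in the loop, the model's output is turned into a label by taking the index of the highest score. Here is a minimal sketch of that lookup, assuming the model outputs one score per class in the same order as the list. The helper name label_for and the sample scores are made up for illustration.

```python
import numpy as np

class_labels = ['Angry', 'Happy', 'Neutral', 'Sad']

def label_for(preds, labels=class_labels):
    """Return the label whose score is highest (hypothetical helper)."""
    preds = np.asarray(preds)
    if preds.shape != (len(labels),):
        raise ValueError("expected one score per class label")
    return labels[int(preds.argmax())]

# Illustrative scores, not real model output.
print(label_for([0.05, 0.80, 0.10, 0.05]))  # Happy
```

If the model was trained with a different class order, the list has to be reordered to match, otherwise the labels shown on screen will be wrong.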

The next step is to capture an image. The function that I will be using is read(). It returns two values, in this order:

- A return code (True if a frame was read successfully, False otherwise)

- The frame read
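Since read() reports success separately from the frame, it is worth checking the return code before using the frame. The sketch below shows one way to do that. The helper frames and the FakeCapture class are hypothetical; FakeCapture only stands in for a camera so the example runs without hardware, and it assumes nothing beyond a read() method that returns a (code, frame) pair as cv2.VideoCapture does.

```python
def frames(cap, max_frames=None):
    """Yield frames from cap until a read fails (hypothetical helper).

    cap is any object with a read() method returning (ok, frame),
    which is the shape of cv2.VideoCapture.read().
    """
    count = 0
    while max_frames is None or count < max_frames:
        ok, frame = cap.read()
        if not ok:          # camera unplugged, busy, or stream ended
            break
        yield frame
        count += 1


class FakeCapture:
    """Stand-in for a camera so the sketch runs without hardware."""
    def __init__(self, items):
        self._items = list(items)

    def read(self):
        if self._items:
            return True, self._items.pop(0)
        return False, None


print(list(frames(FakeCapture(["frame-1", "frame-2"]))))
# ['frame-1', 'frame-2']
```

With a real webcam you would pass cv2.VideoCapture(0) instead of FakeCapture.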

Many OpenCV functions, including the Haar cascade face detector used here, work on grayscale images, so we need to convert each captured frame to grayscale.
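To see what the grayscale conversion does, here is a small NumPy sketch that applies the standard luma weights (0.299 R + 0.587 G + 0.114 B) to a frame in OpenCV's BGR channel order. The function bgr_to_gray is a hypothetical stand-in written for illustration, not OpenCV's implementation, and its output may differ from cv2.cvtColor by a gray level because of rounding.

```python
import numpy as np

def bgr_to_gray(frame_bgr):
    """Approximate a BGR-to-gray conversion with the standard luma weights."""
    frame = np.asarray(frame_bgr, dtype=np.float64)
    b, g, r = frame[..., 0], frame[..., 1], frame[..., 2]
    gray = 0.114 * b + 0.587 * g + 0.299 * r
    return np.clip(np.rint(gray), 0, 255).astype(np.uint8)

# A 1x2 test image in BGR order: one white pixel, one pure-blue pixel.
tiny = np.array([[[255, 255, 255], [255, 0, 0]]], dtype=np.uint8)
print(bgr_to_gray(tiny).tolist())  # [[255, 29]]
```

The three-channel frame becomes a single-channel image of the same height and width, which is the form the face detector receives.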

The detectMultiScale() function returns one (x, y, w, h) tuple per detected face: the top-left corner of the bounding rectangle plus its width and height. Using the built-in rectangle() function, a rectangle from (x, y) to (x+w, y+h) is drawn around each detected face in the captured frame.
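The box arithmetic described above can be sketched with plain NumPy. The helpers box_corners and crop are hypothetical names, and the detection values are made up. One thing to watch is that array indexing takes rows (y) before columns (x), the opposite of the (x, y) order used for drawing.

```python
import numpy as np

def box_corners(x, y, w, h):
    """Convert an (x, y, w, h) box to top-left and bottom-right points."""
    return (x, y), (x + w, y + h)

def crop(gray, x, y, w, h):
    """Cut the box out of a 2-D image; rows (y) come before columns (x)."""
    return gray[y:y + h, x:x + w]

gray = np.zeros((480, 640), dtype=np.uint8)   # stand-in for a grayscale frame
x, y, w, h = 100, 50, 120, 160                # made-up detection
print(box_corners(x, y, w, h))        # ((100, 50), (220, 210))
print(crop(gray, x, y, w, h).shape)   # (160, 120)
```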

while True:
    # Grab one frame; ret is False if the camera could not be read
    ret,frame=cap.read()
    if not ret:
        break

    # The face detector works on a grayscale copy of the frame
    gray=cv2.cvtColor(frame,cv2.COLOR_BGR2GRAY)
    faces=face_classifier.detectMultiScale(gray,1.3,5)

    for (x,y,w,h) in faces:
        # Draw a blue box around the face, then crop and resize it to 48x48
        cv2.rectangle(frame,(x,y),(x+w,y+h),(255,0,0),2)
        roi_gray=gray[y:y+h,x:x+w]
        roi_gray=cv2.resize(roi_gray,(48,48),interpolation=cv2.INTER_AREA)

        if np.sum([roi_gray])!=0:
            # Scale pixels to 0..1 and add the channel and batch dimensions
            roi=roi_gray.astype('float')/255.0
            roi=img_to_array(roi)
            roi=np.expand_dims(roi,axis=0)

            # Pick the class with the highest score and write it on the frame
            preds=classifier.predict(roi)[0]
            label=class_labels[preds.argmax()]
            label_position=(x,y)
            cv2.putText(frame,label,label_position,cv2.FONT_HERSHEY_SIMPLEX,2,(0,255,0),3)
        else:
            cv2.putText(frame,'No Face Found',(20,20),cv2.FONT_HERSHEY_SIMPLEX,2,(0,255,0),3)

    cv2.imshow('Emotion Detector',frame)

    # Press q to quit
    if cv2.waitKey(1) & 0xFF == ord('q'):
        break

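In the above while loop, each detected face is cropped, resized to 48×48 pixels, and classified into one of the four emotions. If the cropped region is completely blank (all pixels are zero), we show the message “No Face Found” instead. When no face is detected in a frame at all, the frame is displayed without any label.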
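The preprocessing inside the loop can be tried on its own to see how the array shape changes. This is a sketch under two assumptions: the model expects input of shape (1, 48, 48, 1), and roi[..., np.newaxis] is used here as a NumPy stand-in for what img_to_array does to a 2-D grayscale array in the default channels-last layout. The helper name prepare_face and the all-white crop are made up.

```python
import numpy as np

def prepare_face(roi_gray):
    """Turn a 48x48 grayscale crop into a (1, 48, 48, 1) float batch."""
    if roi_gray.shape != (48, 48):
        raise ValueError("expected a 48x48 grayscale crop")
    roi = roi_gray.astype('float32') / 255.0   # pixel values 0..255 -> 0..1
    roi = roi[..., np.newaxis]                 # add channel axis: (48, 48, 1)
    roi = np.expand_dims(roi, axis=0)          # add batch axis: (1, 48, 48, 1)
    return roi

face = np.full((48, 48), 255, dtype=np.uint8)  # made-up all-white crop
batch = prepare_face(face)
print(batch.shape)  # (1, 48, 48, 1)
```

The batch axis is needed because predict() works on a batch of images, even when the batch holds a single face.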

For cleaning everything up:

cap.release()
cv2.destroyAllWindows()

Let’s do some Testing!!

Let us now test this model with different facial expressions and see whether we get the right results.

Clearly, it detects my face and also predicts the emotion correctly. Test this model on your system with different people. I hope you enjoyed learning with me, happy learning ahead.


Your post is marvellous. It gives a nice intuition and great insight into how we can code to find human emotions. I have one question, though: “You have used Keras for this model. Can we use any other algorithm for this model?”

Please reply.

Thank you

if np.sum([roi_gray])!=0:
    roi=roi_gray.astype('float')/255.0
    roi=img_to_array(roi)
    roi=np.expand_dims(roi,axis=0)

Can you please explain the exact working of these lines?