LSTM in deep learning

Long Short-Term Memory (LSTM) is a recurrent neural network (RNN) architecture. Unlike feedforward networks, which pass each input straight through from input to output, an LSTM has feedback connections, so what it saw earlier in a sequence influences how it handles what comes next. This lets it process sequential data such as video, text, speech and other audio, and use that data to predict the next output or to recognize patterns in the sequence.

Examples:

- YouTube automated subtitles, generated by listening to the people speaking in a video.

- A speech analyzer in your virtual assistant

- Gboard predictive text

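LSTM was proposed by Sepp Hochreiter and Jürgen Schmidhuber to deal with the exploding and vanishing gradient problems that make plain RNNs hard to train on long sequences. An LSTM unit has a cell, an input gate, an output gate and a forget gate: the cell carries information across time steps, and the gates control what is written to it, what is read out of it and what is erased from it. LSTM networks are one of the most widely used solutions to these problems.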

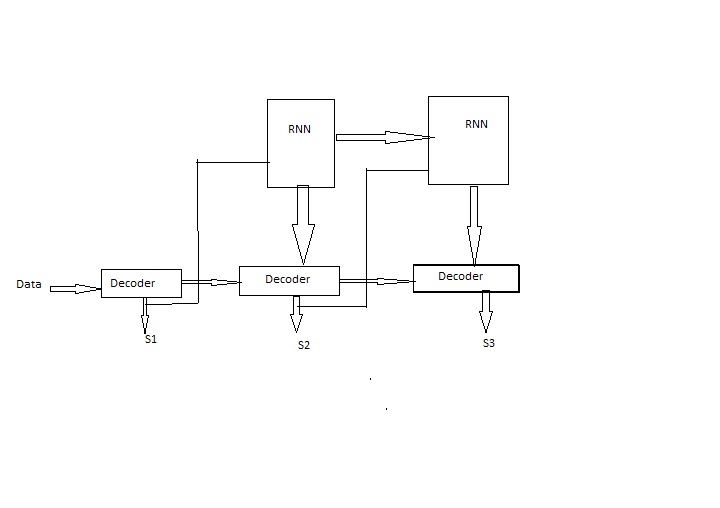

Architecture of LSTM

An LSTM can be pictured as a detective investigating a crime. On his first visit to the crime scene he deduces a motive and tries to work out why and how it may have happened.

Suppose he first believes the victim died of a drug overdose, but the autopsy then says the death was caused by a powerful poison. Alas! The previous cause of death is forgotten, and so are all the facts that rested on it.

Or it may look as if the victim committed suicide, until it is later found out that he was killed as the wrong target. We collect the scraps of information and build a final scenario from them to catch the killer, and the final output is a successful investigation.

Let’s get into the architecture of the LSTM network:
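As a rough sketch of how the cell and the three gates fit together, here is one time step of a standard LSTM in plain Python. It is a minimal illustration under assumptions, not a reference implementation: the names `lstm_step`, `affine`, `params` and `random_layer`, the layer sizes and the random weights are all made up for this example, and a real model would use a library layer with trained weights.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def affine(weights, bias, inputs):
    # One row of weights (and one bias value) per hidden unit.
    return [sum(w * v for w, v in zip(row, inputs)) + b
            for row, b in zip(weights, bias)]


def lstm_step(x, h_prev, c_prev, params):
    """Run one LSTM time step.

    x, h_prev, c_prev are lists of floats. params (a hypothetical layout)
    maps "i", "f", "o", "g" to a (weights, bias) pair.
    """
    xh = x + h_prev  # concatenate the new input with the previous output
    i = [sigmoid(z) for z in affine(*params["i"], xh)]    # input gate
    f = [sigmoid(z) for z in affine(*params["f"], xh)]    # forget gate
    o = [sigmoid(z) for z in affine(*params["o"], xh)]    # output gate
    g = [math.tanh(z) for z in affine(*params["g"], xh)]  # candidate values
    # Cell: keep part of the old state, write part of the candidate.
    c = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c_prev, i, g)]
    # Output: expose a gated view of the updated cell.
    h = [ok * math.tanh(ck) for ok, ck in zip(o, c)]
    return h, c


# Toy usage: untrained random weights, sizes chosen only for illustration.
random.seed(0)
input_size, hidden_size = 3, 2


def random_layer():
    weights = [[random.uniform(-0.5, 0.5)
                for _ in range(input_size + hidden_size)]
               for _ in range(hidden_size)]
    return weights, [0.0] * hidden_size


params = {name: random_layer() for name in "ifog"}
h, c = [0.0] * hidden_size, [0.0] * hidden_size
sequence = [[random.uniform(-1, 1) for _ in range(input_size)]
            for _ in range(5)]
for x in sequence:
    h, c = lstm_step(x, h, c, params)  # h and c feed back into the next step
print(h, c)
```

In terms of the detective story, the forget gate `f` is what throws away the old cause of death, the input gate `i` decides how much of the new evidence `g` goes into the case file `c`, and the output gate `o` decides how much of that file shows up in the current conclusion `h`.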

That’s it for now. More in the next post.
