Perceptron Neural Network for Logical “OR” Operation in Python

This post gives a short introduction to neural networks, then implements and trains a simple perceptron neural network for the logical “or” operation in Python.

What is a Neural Network?

A neural network, or more precisely an artificial neural network, is simply an interconnection of single entities called neurons. These networks form an integral part of Deep Learning.

Neural networks can contain several layers of neurons. Each layer contains some neurons and is followed by the next layer. The first layer takes in the input. Each layer then performs some operation on its input and passes the result on to the next layer. The final layer gives us the output. By training the network using large amounts of data, we can optimise the network to produce the desired results.

Most layers also contain a bias value. These are values passed on as input to the next layer, though they are not neurons themselves.

A Neuron – The Basic Entity

A neuron basically performs the following operations:

- Accepts input from all connected neurons and the bias value from the previous layer

- Based on the initial or previously learned data (as the case may be), applies a weight to each input and adds the results up

- Applies an activation function to the summed value

- After all neurons in the layer are done, passes this data on to the next layer
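
The steps above can be sketched for a single neuron as follows. This is only a minimal illustration: the function name `neuron_output` and the sample inputs and weights are made up for this example and are not part of the program later in the post.

```python
import math

def sigmoid(z):
    """One common activation function; squashes z into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron_output(inputs, weights, bias, bias_weight, activation=sigmoid):
    """Weighted sum of the inputs and the bias, passed through an activation."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias * bias_weight
    return activation(total)

# Hypothetical values, chosen only to show the arithmetic:
# 1.0*0.4 + 0.0*0.6 + 1.0*(-0.2) = 0.2, then sigmoid(0.2)
out = neuron_output([1.0, 0.0], [0.4, 0.6], bias=1.0, bias_weight=-0.2)
print(out)
```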

The Weight

Weight is a variable that keeps changing during the training period of a neural network. It basically describes the relationship between the current neuron and the neuron from which it is receiving the input. The network learns this relationship based on past data processing.

Activation Function

An activation function operates on the summed value of the neuron and usually aims at limiting the value between a lower and an upper limit. Many common functions, such as the sigmoid function, limit the values to between 0 and 1. There are a number of such standard activation functions. Programmers may also develop their own activation functions if necessary. The value returned by this function is the final value of that neuron.
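
As a rough illustration of how an activation function limits values, the sketch below evaluates the sigmoid at a few sample points next to a hand-written step function. The step function is just an assumed example of a "custom" activation; it is not used elsewhere in this post.

```python
import math

def sigmoid(z):
    """Squash any real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def step(z):
    """A hand-written alternative: the output is exactly 0 or 1."""
    return 1.0 if z > 0 else 0.0

# Large negative sums map close to 0, large positive sums close to 1.
for z in (-10.0, -1.0, 0.0, 1.0, 10.0):
    print(z, round(sigmoid(z), 4), step(z))
```

Whatever the size of the summed input, the sigmoid output stays strictly between 0 and 1, with an input of 0 mapping to exactly 0.5.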

This is basically the work of a neuron. The neurons are networked and structured so as to perform the required operation as precisely as possible.

Training a Neural Network

Training a neural network involves giving it data, both inputs and the expected outputs, several times. The network uses this data to gradually adjust its weights and bring its output closer and closer to the desired output.

The weight modification is one of the most important processes, and a method called “backpropagation” is usually performed to analyse how much each weight contributed to the error. In this implementation, however, we keep things simple. We don’t implement backpropagation, as it is not necessary for our problem statement.

A parameter called “Learning Rate” is also specified, which determines the size of the steps in which the network learns, that is, in small steps or by jumping in huge steps. 0.5 to 1 is a good value for this implementation.

The formula we use to reassign the weights here is,

NewWeight = OldWeight + (Error * Input * LearningRate)

where, Error = ExpectedOutput – ActualOutput
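
To make the formula concrete, here is a single update step worked through in code. The starting weights of 0.5 and the chosen training example are hypothetical values picked for illustration, and `update_weight` is a helper name introduced only for this sketch.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def update_weight(old_weight, error, inp, learning_rate):
    """NewWeight = OldWeight + (Error * Input * LearningRate)"""
    return old_weight + error * inp * learning_rate

# Hypothetical starting point: all three weights at 0.5.
weights = [0.5, 0.5, 0.5]   # weight for input 1, input 2 and the bias
inputs = [1, 0, 1]          # input 1, input 2 and the constant bias value
expected = 1                # 1 OR 0 should give 1
learning_rate = 1

actual = sigmoid(sum(w * x for w, x in zip(weights, inputs)))
error = expected - actual
new_weights = [update_weight(w, error, x, learning_rate)
               for w, x in zip(weights, inputs)]
print(actual, error, new_weights)
```

Note that the weight attached to an input of 0 does not change in this step, because the correction is multiplied by the input.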

Perceptron

A perceptron is a very basic neural network. The one used here has a 2-neuron input layer and a 1-neuron output layer. This neural network can be used to distinguish between two groups of data, i.e. it can perform only very basic binary classifications. It cannot, however, implement the XOR gate, since the XOR output set is not linearly separable: no single straight line divides the inputs that yield 1 from those that yield 0. (Refer to this for more)
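
The difference between OR and XOR can be checked with a small brute-force search. This sketch makes two simplifying assumptions that are not part of the program in this post: it uses a hard threshold instead of the sigmoid, and it only tries weights on a coarse grid from -2 to 2. The helper name `find_separator` is made up for the example.

```python
from itertools import product

def step(z):
    """Hard threshold: fire (1) only for a positive weighted sum."""
    return 1 if z > 0 else 0

def find_separator(truth_table, grid):
    """Return the first (w1, w2, b) on the grid that reproduces the table, or None."""
    for w1, w2, b in product(grid, repeat=3):
        if all(step(w1 * x + w2 * y + b) == out
               for (x, y), out in truth_table.items()):
            return (w1, w2, b)
    return None

OR_TABLE = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 1}
XOR_TABLE = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}

grid = [i / 2 for i in range(-4, 5)]  # -2.0, -1.5, ..., 2.0

print("OR: ", find_separator(OR_TABLE, grid))
print("XOR:", find_separator(XOR_TABLE, grid))
```

The search finds a set of weights for OR but none for XOR. A finer or wider grid would not help with XOR, since a single weighted sum cannot be positive for (0,1) and (1,0) while being non-positive for both (0,0) and (1,1).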

Using Perceptron Neural Network for OR Operation

Consider the following program using a perceptron neural network,

    import random

    import numpy

    lr = 1    # learning rate
    bias = 1  # constant bias value; only its weight is trained

    # Assign random initial weights: one per input, plus one for the bias
    weights = [random.random() for _ in range(3)]

    def ptron(inp1, inp2, outp):
        """Run one training step on a single example (inp1, inp2) -> outp."""
        outp_pn = inp1 * weights[0] + inp2 * weights[1] + bias * weights[2]
        outp_pn = 1.0 / (1 + numpy.exp(-outp_pn))  # sigmoid function
        err = outp - outp_pn
        weights[0] += err * inp1 * lr  # modify the weights
        weights[1] += err * inp2 * lr
        weights[2] += err * bias * lr

    for i in range(50):  # train with the data
        ptron(0, 0, 0)   # pass the truth values of OR
        ptron(1, 1, 1)
        ptron(1, 0, 1)
        ptron(0, 1, 1)

    for x, y in [(0, 0), (1, 0), (0, 1), (1, 1)]:
        # Compute the output based on the trained weights
        outp_pn = x * weights[0] + y * weights[1] + bias * weights[2]
        outp = 1.0 / (1 + numpy.exp(-outp_pn))
        print(x, "OR", y, "yields:", outp)

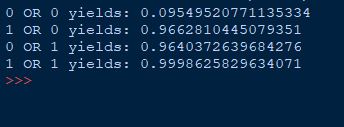

For one of the runs, it yields the following truth table,

The values are hence almost 1 or almost 0.

The number of loops for the training may be changed and experimented with. Further, we have used the sigmoid function as the activation function here.
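
One way to experiment with the number of training loops is to wrap the training in a function and compare the worst-case error for different loop counts. The sketch below is a variation written for this purpose, not the program above: the names `train_or`, `predict` and `worst_error` are introduced here, it uses `math.exp` instead of numpy, and it fixes the random seed so that runs are repeatable.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

# (input 1, input 2, expected output), in the same order as the program above
OR_SAMPLES = [(0, 0, 0), (1, 1, 1), (1, 0, 1), (0, 1, 1)]

def train_or(epochs, lr=1.0, bias=1.0, seed=0):
    """Train three weights on the OR truth table and return them."""
    rng = random.Random(seed)  # fixed seed: an assumption for repeatability
    weights = [rng.random() for _ in range(3)]
    for _ in range(epochs):
        for inp1, inp2, target in OR_SAMPLES:
            out = sigmoid(inp1 * weights[0] + inp2 * weights[1] + bias * weights[2])
            err = target - out
            weights[0] += err * inp1 * lr
            weights[1] += err * inp2 * lr
            weights[2] += err * bias * lr
    return weights

def predict(weights, x, y, bias=1.0):
    return sigmoid(x * weights[0] + y * weights[1] + bias * weights[2])

def worst_error(weights):
    """Largest gap between expected and actual output over the four cases."""
    return max(abs(t - predict(weights, x, y)) for x, y, t in OR_SAMPLES)

for epochs in (5, 50, 500):
    print(epochs, "loops -> worst error", round(worst_error(train_or(epochs)), 4))
```

With more loops, the outputs should move closer to the exact values 0 and 1, at the cost of a longer training time.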

Note that during the training process we only change the weights, including the weight applied to the bias; the bias value itself stays fixed at 1. This is a very important aspect of a perceptron. For some more advanced implementations, try Binary Classification using Neural Networks
