Identifying Tweets on Twitter in Python Using Machine Learning

We build a model that identifies whether a tweet is positive or negative. The approach is general, so it can be reused for similar text classification tasks in natural language processing.

Predicting the nature of a text falls under ‘Natural Language Processing’. Specific libraries are used to clean and classify text, which makes the workflow a bit different from simple classification and prediction algorithms.

Prerequisites:

- You need to have a dataset file with a .tsv extension.

- Set the folder in which your dataset is stored as the working directory.

- Install Spyder or any similar working environment (Python 3.7 or later).

- You need to know the Python programming language and Natural Language Processing.

Step by step implementation:

Let us look at the steps to identify the nature of the tweets. Make sure that you have checked the prerequisites to this implementation.

1. Importing the libraries

First of all, import the libraries that we are going to use:

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

2. Importing the dataset

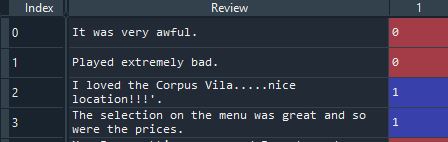

The dataset consists of two columns: the first holds the tweet and the second holds a ‘0’ or a ‘1’ that labels the tweet as positive or negative. The dataset is a ‘.tsv’ (Tab Separated Values) file rather than a ‘.csv’ (Comma Separated Values) file because tweets usually contain a lot of commas, and in a ‘.csv’ file every comma would be read as a column separator.

dataset = pd.read_csv('Tweeter_tweets.tsv', delimiter = '\t', quoting = 3)

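‘quoting = 3’ (the value of ‘csv.QUOTE_NONE’) tells pandas to treat double quotes as ordinary characters rather than as field quoting, so quotes inside a tweet do not break the parsing.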
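As a rough illustration of what the tab delimiter and ‘quoting = 3’ do, the sketch below reads a tiny in-memory sample instead of the real file. The sample rows and the ‘Label’ column name are made up for the example; only the ‘Tweet’ column name comes from the code in this article.

```python
import csv
import io

import pandas as pd

# Two made-up rows standing in for the real .tsv file (assumption: the
# second column is named 'Label'; the article does not give its name).
sample_tsv = (
    "Tweet\tLabel\n"
    'He said "wow", loved this place, really\t1\n'
    "Crust is not good, sadly\t0\n"
)

# csv.QUOTE_NONE equals 3, so this is the same as quoting = 3.
dataset = pd.read_csv(io.StringIO(sample_tsv), delimiter="\t", quoting=csv.QUOTE_NONE)

print(dataset.shape)        # two rows, two columns
print(dataset["Tweet"][0])  # commas and double quotes are kept as-is
```

Because the columns are separated by tabs, the commas inside each tweet stay part of the text.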

3. Filtering the text

a) Removing non-significant characters

- We need to import the ‘re’ library, which has some great tools for cleaning text efficiently. We will keep only the letters from A to Z, in upper and lower case.

- The tool that will help us do this is the ‘sub’ function. The trick is that we specify what we want to keep rather than what we want to remove: inside the square brackets, the characters following the hat (^) are the ones that are not removed from the tweet. The second argument is a space, because every removed character is replaced by a space.

- The second step is to put all the letters of the tweet in lowercase. We use the ‘lower’ method for this.

import re

tweet = re.sub('[^a-zA-Z]', ' ', dataset['Tweet'][0])

tweet = tweet.lower()

tweet = tweet.split()

For example, ‘I loved the Corpus Vila…..nice location!!!’

Output (the words of the resulting list, shown separated by spaces):

i loved the corpus vila nice location
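The cleaning steps above can be wrapped in a small helper, sketched here on the example sentence. The name ‘clean_tweet’ is hypothetical and only used for illustration.

```python
import re


def clean_tweet(text):
    """Keep letters only, lowercase the text, and split it into words."""
    # Every character that is not a letter is replaced by a space.
    letters_only = re.sub("[^a-zA-Z]", " ", text)
    # split() without arguments also collapses runs of spaces.
    return letters_only.lower().split()


words = clean_tweet("I loved the Corpus Vila.....nice location!!!")
print(words)
print(" ".join(words))
```

Calling ‘split’ without arguments is what keeps the repeated spaces left by the removed punctuation from producing empty words.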

b) Removing the non-significant words

- We need to import the ‘nltk’ library, which contains many classes, functions, data sets, and texts for natural language processing.

- We also need to download the stopwords package and import ‘stopwords’ from ‘nltk.corpus’. It provides a list of words that are irrelevant for predicting the nature of the tweet.

- We will now use the ‘split’ method, which splits the tweet into its words. The tweet (a string) becomes a list in which each word is one element.

import re

import nltk

nltk.download('stopwords')

from nltk.corpus import stopwords

tweet = re.sub('[^a-zA-Z]', ' ', dataset['Tweet'][0])

tweet = tweet.lower()

tweet = tweet.split()

stop_words = set(stopwords.words('english'))

tweet = [word for word in tweet if word not in stop_words]
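The filtering itself can be tried without downloading anything. The sketch below uses a small hand-written set as a stand-in for NLTK's English stop-word list, which is much longer; the word list is an assumption made for the example.

```python
# Tiny stand-in for set(stopwords.words('english')); the real list is longer.
stop_words = {"i", "the", "a", "is", "this"}

# A tweet that has already been cleaned, lowercased, and split.
tweet = ["i", "loved", "the", "corpus", "vila", "nice", "location"]

# Keep only the words that are not stop words.
filtered = [word for word in tweet if word not in stop_words]
print(filtered)
```

Building the set once, outside the list comprehension, avoids recreating it for every word.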

c) Stemming

- We will also apply stemming, which reduces the different versions of the same word to their common root. For example, ‘loved’ and ‘loving’ both become ‘love’.

- Let’s start by importing the ‘PorterStemmer’ class. We need to create an object of this class because we are going to use it in the ‘for’ loop. Let’s call this object ‘psw’.

- The first thing we’ll do is go through all the words of the tweet.

- Now that the object is created, we apply its ‘stem’ method to every word of the tweet.

import re

import nltk

nltk.download('stopwords')

from nltk.corpus import stopwords

from nltk.stem.porter import PorterStemmer

tweet = re.sub('[^a-zA-Z]', ' ', dataset['Tweet'][0])

tweet = tweet.lower()

tweet = tweet.split()

psw = PorterStemmer()

stop_words = set(stopwords.words('english'))

tweet = [psw.stem(word) for word in tweet if word not in stop_words]
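To see the stemmer on its own, here is a minimal sketch that stems three forms of the same word. It assumes ‘nltk’ is installed; the stemmer does not need any downloaded data.

```python
from nltk.stem.porter import PorterStemmer

psw = PorterStemmer()

# Different forms of the same word collapse to one stem.
forms = ["loved", "loving", "loves"]
stems = [psw.stem(word) for word in forms]

for word, stem in zip(forms, stems):
    print(word, "->", stem)
```

Stemming keeps the number of distinct words down, which in turn keeps the number of columns in the later matrix down.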

- Finally, we need to join the words of this tweet list back into a single string.

- We use the ‘join’ method for this. The cleaned tweets are collected in a list, which the code below calls ‘raw_model’.

- We now create a sparse matrix by taking all the different words of the tweets and creating one column for each of these words. To do this, we import the ‘CountVectorizer’ class from ‘sklearn’.

- Here, we take all the words of the different tweets and assign one column to each word. We will have a lot of columns, and for each tweet, each column will contain the number of times the associated word appears in the tweet.

- Then, we put all these columns in a table where the rows are the 5000 tweets. Each cell of this table corresponds to one specific tweet and one specific word of ‘raw_model’, and it holds the number of times that word appears in that tweet.

- Because most of its cells are zero, this table is what is called a sparse matrix.
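The article does not show the loop that cleans every tweet and joins the words back together, so the following is only a sketch of how ‘raw_model’ might be built. The helper name ‘build_corpus’, its parameters, the sample tweets, and the small stop-word set are all assumptions made for the example.

```python
import re


def build_corpus(tweets, stop_words, stem=lambda word: word):
    """Clean every tweet and return a list of space-joined strings."""
    corpus = []
    for text in tweets:
        # Keep letters only, lowercase, and split into words.
        words = re.sub("[^a-zA-Z]", " ", text).lower().split()
        # Drop stop words and stem what is left.
        words = [stem(word) for word in words if word not in stop_words]
        # Join the words back into one string per tweet.
        corpus.append(" ".join(words))
    return corpus


# Made-up tweets and a tiny stand-in stop-word set, for illustration only.
tweets = ["I loved the Corpus Vila.....nice location!!!", "The crust is not good."]
raw_model = build_corpus(tweets, stop_words={"i", "the", "is"})
print(raw_model)
```

With the objects from the earlier steps, the call would presumably look like ‘build_corpus(dataset['Tweet'], set(stopwords.words('english')), psw.stem)’.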

from sklearn.feature_extraction.text import CountVectorizer

cvw = CountVectorizer(max_features = 9500)

X = cvw.fit_transform(raw_model).toarray()

y = dataset.iloc[:, 1].values
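To make the word-count table concrete, the sketch below runs ‘CountVectorizer’ on three made-up, already cleaned tweets instead of the real ‘raw_model’. It assumes ‘scikit-learn’ is installed.

```python
from sklearn.feature_extraction.text import CountVectorizer

# Made-up, already cleaned tweets standing in for raw_model.
raw_model = ["love place", "crust good", "love love crust"]

cvw = CountVectorizer(max_features=9500)
sparse_X = cvw.fit_transform(raw_model)  # SciPy sparse matrix
X = sparse_X.toarray()                   # dense NumPy array, one row per tweet

print(cvw.vocabulary_)  # maps each word to its column index
print(X)
```

Each row is one tweet and each column is one word, so the third row holds a 2 in the ‘love’ column. On this tiny sample ‘max_features’ has no effect, because there are only four distinct words.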
