get_weights() and set_weights() functions in Keras layers

In this article, we will look at the get_weights() and set_weights() functions of Keras layers. First, we will build a fully connected feed-forward neural network and use it for simple linear regression. Then, we will see how to use get_weights() and set_weights() on each Keras layer in the model. Note that the model shown here is very simple; you can always make it more complex and powerful. Don't worry, I will guide you through it. So, let's begin!

get_weights() and set_weights() in Keras

According to the official Keras documentation, every layer of a model (for example model.layers[0], or model.get_layer('layer_1')) provides these two methods:

layer.get_weights(): Returns the layer's weights as a list of NumPy arrays. For a Dense layer, the first array holds the weights (the kernel) and the second array holds the biases.

layer.set_weights(weights): Sets the layer's weights from a list of NumPy arrays. The list must contain the same number of arrays, with the same shapes, as the list returned by get_weights().
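A minimal sketch of both calls on a single Dense layer, assuming a Keras version that provides keras.Input and keras.Sequential (Keras 2.x and 3.x both do). The layer name "demo" and the replacement values are arbitrary and chosen only for illustration.

```python
import numpy as np
import keras

# Wrap one Dense layer (1 input, 4 units) in a model so that its weights get created.
model = keras.Sequential([keras.Input(shape=(1,)), keras.layers.Dense(4, name="demo")])
layer = model.get_layer("demo")

kernel, bias = layer.get_weights()   # a list of two arrays: [kernel, bias]
print(kernel.shape, bias.shape)      # (1, 4) (4,)

# set_weights() takes a list with the same number of arrays and the same shapes.
layer.set_weights([np.full((1, 4), 0.5, dtype="float32"), np.arange(4, dtype="float32")])
print(layer.get_weights()[0])        # [[0.5 0.5 0.5 0.5]]
print(layer.get_weights()[1])        # [0. 1. 2. 3.]
```

Passing arrays with different shapes, or a different number of arrays, makes set_weights() raise a ValueError instead of silently reshaping anything.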

Now let us build a fully connected neural network and use it for linear regression. First, import the required libraries.

```python
import keras
from keras.models import Sequential
from keras.layers import Dense, Activation
import numpy as np
import matplotlib.pyplot as plt
```

Create a small dataset of 100 random inputs with noisy output targets that follow y = 3x.

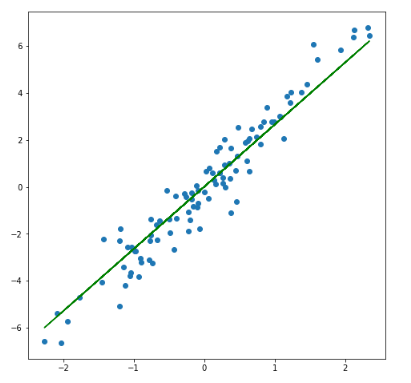

```python
x = np.random.randn(100)
y = x*3 + np.random.randn(100)*0.8
```
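Since the targets are 3x plus Gaussian noise with standard deviation 0.8, a well-trained model should end up with an overall slope near 3 and an intercept near 0. As a reference point, a closed-form least-squares line can be computed with NumPy alone. This sketch uses a seeded generator, which is an assumption; the data above are unseeded.

```python
import numpy as np

# Seeded generator (an assumption; the article's data are unseeded) so the run is repeatable.
rng = np.random.default_rng(0)
x = rng.standard_normal(100)
y = x * 3 + rng.standard_normal(100) * 0.8

# Ordinary least squares for y = slope * x + intercept; polyfit returns the highest power first.
slope, intercept = np.polyfit(x, y, deg=1)
print(f"slope = {slope:.3f}, intercept = {intercept:.3f}")
```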

Create a neural network model with 2 layers.

```python
model = Sequential()
model.add(Dense(4, input_dim=1, activation='linear', name='layer_1'))
model.add(Dense(1, activation='linear', name='layer_2'))
model.compile(optimizer='sgd', loss='mse', metrics=['mse'])
```

Here, the first layer has 4 units (4 neurons or nodes) and the second layer has 1 unit. The first layer takes the input and the second layer produces the output. Both layers use the linear activation function because we are building a linear regression model.
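Because both layers are linear, the whole network is still a single straight line: with hidden values h = x W1 + b1, the output is y_hat = h W2 + b2 = x (W1 W2) + (b1 W2 + b2). The NumPy sketch below checks this using the initial weights from the get_weights() output in this article; no Keras is needed, and the variable names are illustrative.

```python
import numpy as np

# Initial weights of layer_1 and layer_2 as printed by the get_weights() loop in this article.
W1 = np.array([[1.0910366, 1.0150502, -0.8962296, -0.3793844]])         # shape (1, 4)
b1 = np.zeros(4)                                                       # shape (4,)
W2 = np.array([[-0.74120843], [0.901124], [0.3898505], [-0.36506158]])  # shape (4, 1)
b2 = np.zeros(1)                                                       # shape (1,)

x = np.array([[-1.0], [0.0], [2.0]])   # a batch of 3 inputs, shape (3, 1)

# Layer-by-layer forward pass with linear (identity) activations.
hidden = x @ W1 + b1                   # shape (3, 4)
y_two_layers = hidden @ W2 + b2        # shape (3, 1)

# The same mapping collapsed into one slope and one intercept.
slope = W1 @ W2                        # shape (1, 1)
intercept = b1 @ W2 + b2               # shape (1,)
y_one_line = x @ slope + intercept

print(np.allclose(y_two_layers, y_one_line))   # True
print(slope.item(), intercept.item())
```

With these starting weights the overall slope is about -0.105 and the intercept is 0, far from the target slope of 3, so training has to push the product W1 W2 toward 3. It also shows why stacking more linear layers adds no expressive power; a non-linear hidden activation such as 'relu' would be needed for that.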

get_weights()

Use get_weights() to read the weights and biases of each layer before training the model. These are the initial values the layers were created with, and training starts from them.

```python
print("Weights and biases of the layers before training the model: \n")
for layer in model.layers:
    print(layer.name)
    print("Weights")
    print("Shape: ", layer.get_weights()[0].shape, '\n', layer.get_weights()[0])
    print("Bias")
    print("Shape: ", layer.get_weights()[1].shape, '\n', layer.get_weights()[1], '\n')
```

Output:

```
Weights and biases of the layers before training the model:

layer_1
Weights
Shape:  (1, 4)
 [[ 1.0910366  1.0150502 -0.8962296 -0.3793844]]
Bias
Shape:  (4,)
 [0. 0. 0. 0.]

layer_2
Weights
Shape:  (4, 1)
 [[-0.74120843]
 [ 0.901124  ]
 [ 0.3898505 ]
 [-0.36506158]]
Bias
Shape:  (1,)
 [0.]
```

Did you notice the shapes of the weights and biases? The weights of a layer have shape (inputs, units) and the biases have shape (units,). get_weights() returned a list of NumPy arrays: index 0 holds the weights array and index 1 holds the bias array. The Dense layer passed to model.add() has a kernel_initializer argument that initializes the weights matrix created by the layer; its default is glorot_uniform. Refer to the official Keras documentation on initializers for more information on glorot_uniform and other initializers. Biases are initialized to zeros by default (bias_initializer='zeros').
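The default glorot_uniform initializer draws each kernel entry from a uniform distribution on [-limit, limit], where limit = sqrt(6 / (fan_in + fan_out)); for a Dense kernel of shape (fan_in, fan_out), fan_in is the number of inputs and fan_out the number of units. The NumPy sketch below computes that limit for both layers and checks that the initial layer_1 kernel printed above falls inside it. The helper names are my own and are not Keras functions.

```python
import numpy as np

def glorot_uniform_limit(fan_in, fan_out):
    # Half-width of the Glorot (Xavier) uniform range: sqrt(6 / (fan_in + fan_out)).
    return float(np.sqrt(6.0 / (fan_in + fan_out)))

def sample_glorot_uniform(shape, rng):
    # Hypothetical helper: draw a Dense kernel of shape (fan_in, fan_out) from U(-limit, limit).
    fan_in, fan_out = shape
    limit = glorot_uniform_limit(fan_in, fan_out)
    return rng.uniform(-limit, limit, size=shape)

limit_1 = glorot_uniform_limit(1, 4)   # layer_1 kernel has shape (1, 4)
limit_2 = glorot_uniform_limit(4, 1)   # layer_2 kernel has shape (4, 1)
print(round(limit_1, 4), round(limit_2, 4))   # 1.0954 1.0954

# Every entry of the initial layer_1 kernel from the output above lies inside [-limit, limit].
printed_kernel_1 = np.array([1.0910366, 1.0150502, -0.8962296, -0.3793844])
print(bool(np.all(np.abs(printed_kernel_1) <= limit_1)))   # True

# A fresh draw with the same shape, plus the default all-zero bias.
rng = np.random.default_rng(42)
kernel = sample_glorot_uniform((1, 4), rng)
bias = np.zeros(4)
print(kernel.shape, bias.shape)   # (1, 4) (4,)
```

Because the two kernels have mirrored shapes, fan_in + fan_out is 5 for both, so they share a limit of about 1.095; the largest printed value, 1.0910366, sits just inside it.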

Now fit the model. After training, running the same loop again shows the updated weights and biases.

```python
model.fit(x, y, batch_size=1, epochs=10, shuffle=False)
```
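A sketch of the set_weights() side, under stated assumptions: after fit() returns, the trained weights are read layer by layer and copied into a freshly built model with the same architecture, and the two models are then checked for identical predictions. The build_model() helper is hypothetical; it recreates this article's 1-4-1 linear network using keras.Input instead of input_dim, and the data are seeded and shaped (100, 1) for repeatability.

```python
import numpy as np
import keras

def build_model():
    # Hypothetical helper: rebuilds the article's network (1 input -> 4 units -> 1 unit).
    model = keras.Sequential([
        keras.Input(shape=(1,)),
        keras.layers.Dense(4, activation="linear", name="layer_1"),
        keras.layers.Dense(1, activation="linear", name="layer_2"),
    ])
    model.compile(optimizer="sgd", loss="mse", metrics=["mse"])
    return model

# Same kind of data as in the article, but seeded and shaped (100, 1).
rng = np.random.default_rng(0)
x = rng.standard_normal((100, 1))
y = x * 3 + rng.standard_normal((100, 1)) * 0.8

trained = build_model()
trained.fit(x, y, batch_size=1, epochs=10, shuffle=False, verbose=0)

# The weights after training, layer by layer.
for layer in trained.layers:
    kernel, bias = layer.get_weights()
    print(layer.name, "kernel:", kernel.ravel(), "bias:", bias)

# Copy the trained weights into a fresh, untrained model with the same architecture.
fresh = build_model()
for src, dst in zip(trained.layers, fresh.layers):
    dst.set_weights(src.get_weights())

# Both models now compute the same function.
x_test = np.array([[-1.0], [0.0], [2.0]])
same = np.allclose(trained.predict(x_test, verbose=0), fresh.predict(x_test, verbose=0))
print(same)  # True
```

Keras models also provide model.get_weights() and model.set_weights(), which handle all layers in one call; the per-layer loop mirrors how this article inspects each layer. Only the weight values are copied, so the receiving model must already have the same layer structure.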
